May 2026 | Updates

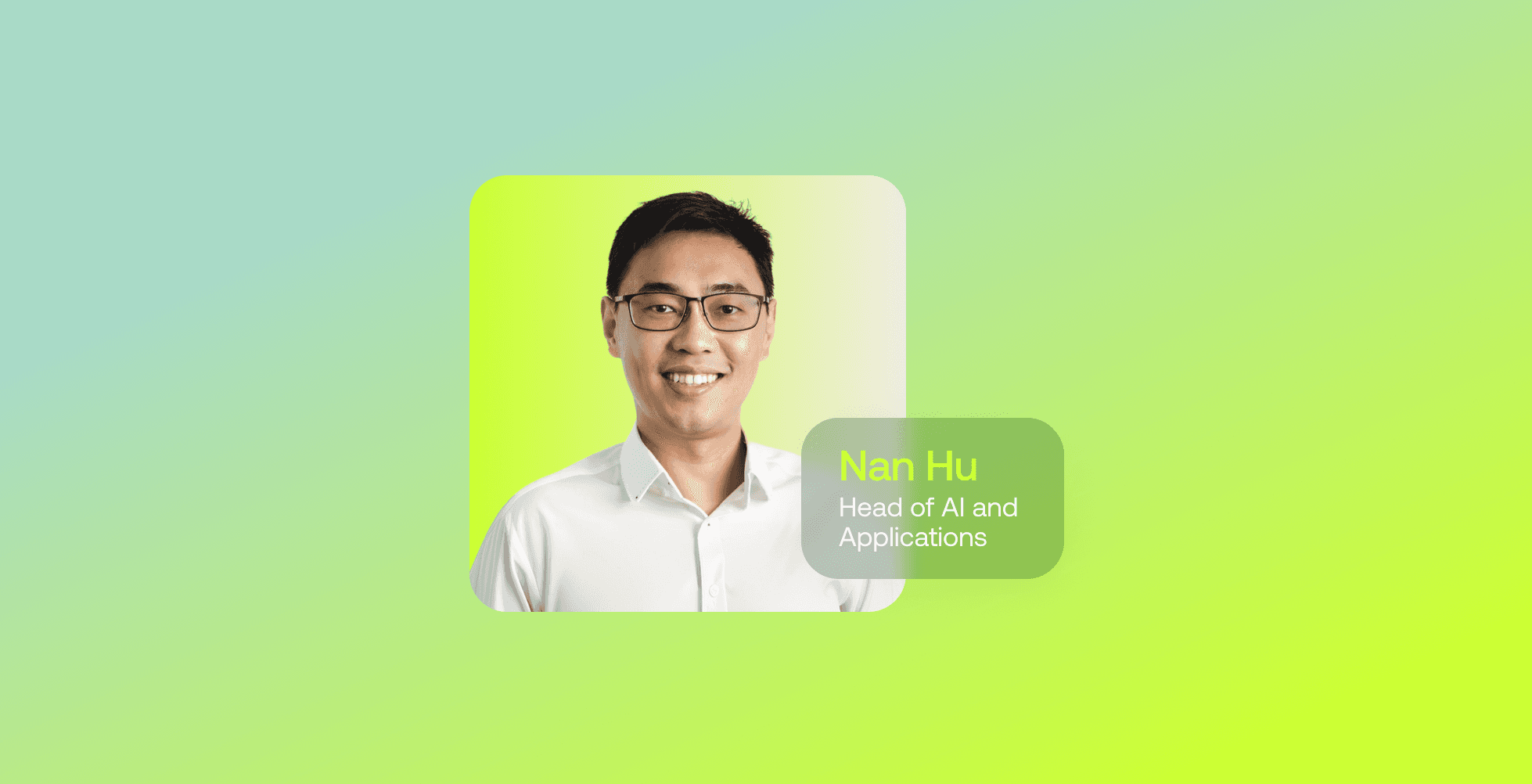

The New AI Stack: Nan Hu on Model-to-Grid

In this interview, Nan Hu shares insights on designing modern AI infrastructure, the shift from MaxP to MaxQ, and why efficiency, not scale alone, will define the next generation of AI systems.

The conversation around AI infrastructure is shifting. GPU-powered systems remain at its centre, increasingly shaped by platforms such as NVIDIA’s AI ecosystem and by new approaches to how compute interacts with power.

Nan Hu, Head of AI & Apps at Firmus, works at this intersection. Leading the development of AI FactoryOS, he collaborates across infrastructure and platform teams to operationalise Firmus’ Model-to-Grid philosophy, an approach that treats AI workloads, hardware, and energy systems as a single optimisation problem. From real-time telemetry to digital twins powered by NVIDIA Omniverse, his team is helping redefine how AI factories are built and run.

Building the Model-to-Grid AI stack

Hu’s AI & Apps team builds the software stack behind AI FactoryOS, working closely with Firmus’ Infrastructure and Platform teams. The team advances the Model-to-Grid philosophy through agentic operations, workload scheduling, and power smoothing for the latest NVIDIA systems, such as GB300.

Building closely on the NVIDIA DSX blueprint ecosystem lets the team optimise across the full stack, from the neural network layer through to the power grid.
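The article does not describe how Firmus implements power smoothing. As a minimal sketch, assuming smoothing means limiting how quickly a rack’s power setpoint may change between control intervals, a ramp-rate limiter clamps each requested setpoint to within a fixed step of the previous one. The function `smooth_power`, the kilowatt values, and the 50 kW step are hypothetical.

```python
from typing import List


def smooth_power(requested_kw: List[float], max_step_kw: float, start_kw: float) -> List[float]:
    """Hypothetical ramp-rate limiter: each output moves at most max_step_kw from the last."""
    if max_step_kw <= 0:
        raise ValueError("max_step_kw must be positive")
    smoothed: List[float] = []
    current = start_kw
    for target in requested_kw:
        # Clamp the change toward the target to the allowed ramp in either direction.
        step = max(-max_step_kw, min(max_step_kw, target - current))
        current += step
        smoothed.append(current)
    return smoothed


if __name__ == "__main__":
    # Bursty demand: a sudden 300 kW spike followed by an equally sudden drop.
    demand = [100.0, 400.0, 400.0, 100.0, 100.0]
    print(smooth_power(demand, max_step_kw=50.0, start_kw=100.0))
    # -> [100.0, 150.0, 200.0, 150.0, 100.0]
```

The grid sees a gradual ramp instead of a step. The sketch only shapes the setpoint; in a real system, throttling as demand rises or holding draw up as it falls needs hardware or energy-storage support that this example does not model.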

Turning telemetry into real-time intelligence

A major challenge in modern AI infrastructure is converting system-level data into actionable insight in real time. Hu sees one of his biggest opportunities in mastering the integration of high-fidelity telemetry from NVIDIA data centre systems, drawn from sources such as NVIDIA Data Center GPU Manager (DCGM), the DMTF Redfish management API, and Baseboard Management Controllers (BMCs). Together, these provide real-time visibility into GPU performance and system health.
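Combining these sources typically starts with normalising their readings into one schema keyed by device and metric. A minimal sketch, assuming the per-source collectors already exist: the `Sample` record, the source labels, and the field names are hypothetical and do not mirror the actual DCGM, Redfish, or BMC data models. It keeps the newest reading for each (device, metric) pair and flags pairs whose data has gone stale, since a real-time controller should not act on old readings.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Sample:
    """One reading in a hypothetical normalised schema (not the DCGM or Redfish data model)."""
    source: str   # e.g. "dcgm", "redfish", "bmc"
    device: str   # e.g. "gpu0", "psu1"
    metric: str   # e.g. "power_w", "temp_c"
    value: float
    ts: float     # collection time, seconds


def latest_view(samples: Iterable[Sample]) -> Dict[Tuple[str, str], Sample]:
    """Keep the newest sample per (device, metric), whichever source reported it."""
    view: Dict[Tuple[str, str], Sample] = {}
    for s in samples:
        key = (s.device, s.metric)
        if key not in view or s.ts > view[key].ts:
            view[key] = s
    return view


def stale_keys(view: Dict[Tuple[str, str], Sample], now: float, max_age_s: float) -> List[Tuple[str, str]]:
    """List (device, metric) pairs whose newest reading is older than max_age_s."""
    return sorted(k for k, s in view.items() if now - s.ts > max_age_s)


if __name__ == "__main__":
    samples = [
        Sample("bmc", "gpu0", "power_w", 690.0, 9.5),
        Sample("dcgm", "gpu0", "power_w", 700.0, 10.0),
        Sample("redfish", "psu1", "power_w", 3200.0, 4.0),
    ]
    view = latest_view(samples)
    print(view[("gpu0", "power_w")].source)            # -> dcgm
    print(stale_keys(view, now=10.0, max_age_s=2.0))   # -> [('psu1', 'power_w')]
```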

Drawing on the modelling, simulation, validation, and optimisation loops he used in earlier smart city projects, Hu’s team is now applying the same principles to AI factories using NVIDIA Omniverse and the DSX blueprint. With today’s compute capacity, these digital twins can run as real-time engines rather than offline planning tools.
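The article does not describe the internals of these loops. Purely as a toy illustration, assuming a linear model in which coolant temperature rise is proportional to rack power, the four stages can be sketched as small functions: fit the model to observed telemetry, simulate candidate power caps, check the model’s error against a tolerance, and choose the highest cap that keeps the simulated outlet temperature within a limit. The model form, function names, and numbers are all hypothetical; an Omniverse-based digital twin would be far richer.

```python
from typing import Optional, Sequence


def fit_gain(power_kw: Sequence[float], rise_c: Sequence[float]) -> float:
    """Modelling: least-squares fit of rise_c = k * power_kw (line through the origin)."""
    den = sum(p * p for p in power_kw)
    if den == 0:
        raise ValueError("need at least one non-zero power sample")
    return sum(p * r for p, r in zip(power_kw, rise_c)) / den


def simulate(k: float, inlet_c: float, power_kw: float) -> float:
    """Simulation: predicted coolant outlet temperature at a given power level."""
    return inlet_c + k * power_kw


def validate(k: float, power_kw: Sequence[float], rise_c: Sequence[float], tol_c: float) -> bool:
    """Validation: the worst prediction error on observed data must be within tol_c."""
    return max(abs(k * p - r) for p, r in zip(power_kw, rise_c)) <= tol_c


def optimise(k: float, inlet_c: float, caps_kw: Sequence[float], limit_c: float) -> Optional[float]:
    """Optimisation: highest power cap whose simulated outlet temperature stays within limit_c."""
    feasible = [c for c in caps_kw if simulate(k, inlet_c, c) <= limit_c]
    return max(feasible) if feasible else None


if __name__ == "__main__":
    observed_kw, observed_rise_c = [50.0, 100.0, 150.0], [5.0, 10.0, 15.0]
    k = fit_gain(observed_kw, observed_rise_c)  # about 0.1 degC per kW
    if validate(k, observed_kw, observed_rise_c, tol_c=0.5):
        print(optimise(k, inlet_c=25.0, caps_kw=[100.0, 150.0, 200.0, 250.0], limit_c=47.0))
        # -> 200.0
```

The validation step is what keeps the loop honest: optimising against a model that no longer matches telemetry would produce confident but wrong setpoints, so the sketch only optimises after the model passes.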

Where AI infrastructure strategies fall short

As AI workloads scale, many organisations are still building infrastructure on outdated assumptions. Hu points to a fundamental shift from ‘MaxP’ (maximum power capacity) to ‘MaxQ’ (maximum tokens per watt). At large scale, the sharp, synchronised swings in power draw that AI workloads can produce may destabilise the grid, making one-size-fits-all infrastructure increasingly unworkable.

“At Firmus, we treat the entire stack, from model architecture to grid constraints, as a continuous optimisation problem.”
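To make the MaxQ metric concrete: tokens per second divided by watts gives tokens per joule, so “tokens per watt” is an energy-efficiency figure. The numbers below are hypothetical, chosen only to show the arithmetic: capping power cuts throughput by 10% but power by 25%, so efficiency rises from 0.10 to 0.12 tokens per joule.

```python
def tokens_per_joule(tokens_per_s: float, power_w: float) -> float:
    """Throughput per watt: (tokens/s) / W is equivalent to tokens per joule."""
    if power_w <= 0:
        raise ValueError("power_w must be positive")
    return tokens_per_s / power_w


def kwh_per_million_tokens(tpj: float) -> float:
    """Energy needed for one million tokens at a given tokens-per-joule efficiency."""
    joules = 1_000_000 / tpj
    return joules / 3_600_000  # 1 kWh = 3.6 MJ


if __name__ == "__main__":
    # Hypothetical numbers for one cluster, uncapped (MaxP-style) vs power-capped (MaxQ-style).
    max_p = tokens_per_joule(12_000, 120_000)  # 0.10 tokens/J
    max_q = tokens_per_joule(10_800, 90_000)   # 0.12 tokens/J
    print(f"MaxP: {max_p:.2f} tok/J, {kwh_per_million_tokens(max_p):.2f} kWh per 1M tokens")
    print(f"MaxQ: {max_q:.2f} tok/J, {kwh_per_million_tokens(max_q):.2f} kWh per 1M tokens")
```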

RAG, agentic systems, and production reality

While agentic AI systems are gaining traction, their effectiveness depends heavily on how they are deployed. Hu notes that as LLMs expand their context windows, traditional RAG is evolving into memory-backed, agentic architectures that offer greater autonomy and control. However, he emphasises the importance of applying AI selectively, with rigorous evaluation frameworks guiding production use.
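The article does not name a specific evaluation framework. As a minimal sketch of the idea, assuming a candidate component is scored on a labelled evaluation set, a promotion gate can require both task accuracy and tail latency to meet fixed thresholds before the component reaches production. The metric choice, thresholds, and function names are hypothetical.

```python
from typing import Sequence


def p95_nearest_rank(latencies_ms: Sequence[float]) -> float:
    """95th percentile by the nearest-rank method (integer maths avoids float rounding)."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n), 1-based
    return ordered[rank - 1]


def ready_for_production(correct: Sequence[bool], latencies_ms: Sequence[float],
                         min_accuracy: float, max_p95_ms: float) -> bool:
    """Hypothetical gate: promote only if accuracy and tail latency both meet thresholds."""
    if not correct:
        raise ValueError("no evaluation results")
    accuracy = sum(correct) / len(correct)
    return accuracy >= min_accuracy and p95_nearest_rank(latencies_ms) <= max_p95_ms


if __name__ == "__main__":
    results = [True] * 19 + [False]                  # 95% accuracy on 20 cases
    latencies = [float(ms) for ms in range(1, 21)]   # 1 ms .. 20 ms
    print(ready_for_production(results, latencies, min_accuracy=0.9, max_p95_ms=20.0))
```

Gating on tail latency as well as accuracy reflects the point that a model can be accurate on average and still be unsuitable where responses must arrive within a bound.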

In critical infrastructure scenarios such as liquid-cooling leak detection, deterministic, rule-based hardware sensors remain essential, because latency and reliability are non-negotiable.
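For contrast, a deterministic rule can be as simple as the sketch below, which assumes a normalised moisture-sensor reading: it trips an alarm once the reading stays at or above a threshold for a set number of consecutive samples. The threshold, sample count, and function name are hypothetical. The property that matters is that identical inputs always give the same result within a fixed number of samples, with no model inference in the loop.

```python
from typing import Iterable, Optional


def first_alarm_index(readings: Iterable[float], threshold: float, consecutive: int) -> Optional[int]:
    """Return the sample index at which the alarm trips, or None if it never trips.

    The alarm trips once `consecutive` readings in a row are at or above `threshold`.
    """
    if consecutive < 1:
        raise ValueError("consecutive must be at least 1")
    run = 0
    for i, value in enumerate(readings):
        # Count consecutive over-threshold readings; any normal reading resets the count.
        run = run + 1 if value >= threshold else 0
        if run >= consecutive:
            return i
    return None


if __name__ == "__main__":
    # A single spike at index 1 is ignored; the sustained rise from index 3 trips at index 5.
    moisture = [0.1, 0.9, 0.2, 0.8, 0.85, 0.9, 0.95]
    print(first_alarm_index(moisture, threshold=0.7, consecutive=3))  # -> 5
```

The consecutive-sample requirement trades a small, bounded detection delay for fewer false alarms from single noisy readings.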

Designing teams across AI and infrastructure

Bridging AI research and infrastructure engineering requires rethinking traditional team structures. Hu describes Firmus’ approach as intentionally hybrid, combining expertise in Slurm and Kubernetes scheduling with research capabilities in model- and kernel-level execution and thermal dynamics. This cross-functional model enables tighter alignment between workload design and power behaviour.

As a result, the team can map power profiles to workload templates and optimise efficiency across both hardware and software layers.
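The article does not say how these mappings are represented. One plausible reading, sketched below under that assumption, is a table from each workload template to an expected per-node peak power, which a scheduler can consult before starting a job so that the site stays within a power budget. The template names, wattages, and greedy admission policy are hypothetical and far simpler than what Slurm or Kubernetes schedulers actually do.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical expected peak power per node (kW) for each workload template.
POWER_PROFILE_KW: Dict[str, float] = {
    "pretrain": 10.0,
    "finetune": 8.0,
    "inference": 6.0,
}


@dataclass
class Job:
    name: str
    template: str  # must be a key of POWER_PROFILE_KW
    nodes: int


def admit(jobs: List[Job], budget_kw: float) -> Tuple[List[str], List[str]]:
    """Greedy in-order admission: start a job only if its expected peak fits the remaining budget."""
    admitted: List[str] = []
    deferred: List[str] = []
    remaining = budget_kw
    for job in jobs:
        need_kw = POWER_PROFILE_KW[job.template] * job.nodes
        if need_kw <= remaining:
            admitted.append(job.name)
            remaining -= need_kw
        else:
            deferred.append(job.name)
    return admitted, deferred


if __name__ == "__main__":
    queue = [Job("A", "pretrain", 4), Job("B", "inference", 5), Job("C", "finetune", 3)]
    print(admit(queue, budget_kw=80.0))  # -> (['A', 'B'], ['C'])
```

Jobs that do not fit are deferred rather than blocking the queue, so a smaller job later in the list can still start; a production scheduler would also weigh priority, fairness, and backfill.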

Rethinking what it means to scale AI

“The belief that AI infrastructure is purely a capital deployment game of hoarding GPUs is fundamentally flawed.”

Amid ongoing investment in GPUs, Hu challenges the idea that AI infrastructure is simply a capital deployment problem. Instead, he argues that the rapid growth of inference workloads is making operational efficiency and energy responsibility the key differentiators. At Firmus, the Model-to-Grid approach demonstrates how AI systems can scale while also supporting and stabilising the energy grid.

Hu’s perspective reflects a broader transition in AI infrastructure: from brute-force scaling to coordinated optimisation across compute and energy systems. As AI factories evolve, approaches like Model-to-Grid highlight a future where performance, efficiency, and sustainability are deeply interconnected.